Compiling is the process of converting source code into machine code that can be executed by a computer, while debugging is the process of identifying and fixing errors or bugs in the code.

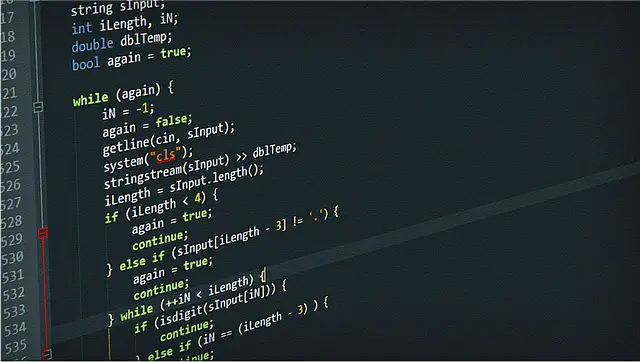

What is debugging?

(Image by Christopher Kuszajewski from Pixabay)

Debugging is the process of finding and fixing bugs, or errors, in software code. It involves identifying the root cause of a problem and then implementing a solution to fix it. Debugging can be done using various tools and techniques such as debuggers, logging, and error messages.

One common tool used for debugging is a debugger program which allows developers to pause the execution of their code at specific points in order to examine its state. This helps them identify where problems are occurring within the code and trace back through its execution path.
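As a rough illustration, here is how pausing execution might look in Python, whose built-in `breakpoint()` call drops into the standard `pdb` debugger. The `average` function is a made-up example, and the call is commented out so the script runs without stopping.

```python
def average(values):
    """Return the arithmetic mean of a list of numbers."""
    total = 0
    for v in values:
        total += v
    # Uncomment the next line to pause here and inspect the program state.
    # Inside pdb, `p total` prints a variable, `n` runs the next line,
    # and `c` continues execution.
    # breakpoint()
    return total / len(values)


print(average([2, 4, 6]))  # prints 4.0
```

Running the whole script under the debugger with `python -m pdb script.py` is another option that needs no changes to the code.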

Another way to debug code is through logging. Developers can insert statements into their code that log information about what’s happening at different stages during runtime. By looking at these logs, they can gain insights into how their application is behaving and identify any issues that need addressing.
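A minimal sketch of this idea, using Python’s standard `logging` module, might look like the following. The `apply_discount` function and the `orders` logger name are invented for the example.

```python
import logging

# Show every message from DEBUG level upward, with a simple format.
logging.basicConfig(level=logging.DEBUG, format="%(levelname)s %(message)s")
log = logging.getLogger("orders")  # illustrative logger name


def apply_discount(price, percent):
    """Return the price after a percentage discount, logging each step."""
    log.debug("apply_discount called with price=%s percent=%s", price, percent)
    if not 0 <= percent <= 100:
        log.warning("percent %s is outside 0-100; price left unchanged", percent)
        return price
    discounted = price * (100 - percent) / 100
    log.debug("discounted price is %s", discounted)
    return discounted


apply_discount(80, 25)   # logs the inputs and the result
apply_discount(80, 140)  # logs a warning about the bad input
```

Reading the log afterwards shows which calls received unexpected input, without stopping the program.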

Debugging is an essential part of software development as it enables developers to create more reliable applications by catching errors early on in the development cycle before they become bigger problems down the line.

What is compiling?

(Photo by Lukas)

Compiling is an essential process in software development. It refers to the conversion of source code written in a high-level programming language into machine code that can be executed by a computer. This transformation involves several steps, including lexical analysis, syntax analysis, semantic analysis, and code generation.

The first step of compiling is lexical analysis, or tokenizing. During this phase, the compiler breaks the source code into tokens (the words of the language), which are then categorized as keywords, identifiers, operators, or literals. The next step is syntax analysis, where the compiler checks that the sequence of tokens follows the grammar rules of the language.
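The tokenizing step can be sketched in a few lines of Python. This is a toy tokenizer for an invented mini-language, not how any particular compiler does it; the keyword set and token names are assumptions made for the example.

```python
import re

KEYWORDS = {"if", "else", "while", "return"}  # illustrative subset

TOKEN_PATTERN = re.compile(r"""
    (?P<LITERAL>\d+(?:\.\d+)?)            # integer or decimal literal
  | (?P<NAME>[A-Za-z_]\w*)                # keyword or identifier
  | (?P<OPERATOR>==|!=|<=|>=|[-+*/=<>()]) # one- or two-character operator
  | (?P<SKIP>\s+)                         # whitespace, ignored
  | (?P<MISMATCH>.)                       # anything else is an error
""", re.VERBOSE)


def tokenize(source):
    """Split source text into (category, text) pairs."""
    tokens = []
    for match in TOKEN_PATTERN.finditer(source):
        kind, text = match.lastgroup, match.group()
        if kind == "SKIP":
            continue
        if kind == "MISMATCH":
            raise SyntaxError(f"unexpected character {text!r}")
        if kind == "NAME":
            # Names are keywords if reserved, otherwise identifiers.
            kind = "KEYWORD" if text in KEYWORDS else "IDENTIFIER"
        tokens.append((kind, text))
    return tokens


print(tokenize("if total >= 10 return total * 2"))
```

A syntax analyzer would then take this list of tokens and check that they appear in an order the grammar allows.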

After those stages comes semantic analysis, where the program is checked for errors of meaning that the grammar alone cannot catch, such as undefined variables and mismatched data types. Finally comes code generation, where the compiler produces machine code, which is typically linked into an executable file that the computer can run.

Compiling helps developers catch many errors before the program ever runs, and it transforms the code into a machine-readable form that the computer can process efficiently.

Debugging Vs. Compiling – Key differences

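Debugging and compiling are two essential processes in programming that help to ensure the code works as expected. The key difference between them is their purpose: compiling translates code into a form that can run, while debugging finds and fixes the causes of incorrect behavior.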

Compiling refers to the process of translating source code into an executable form that can be run on a computer. During compilation, the compiler checks for errors such as syntax errors and identifies any warnings or potential issues with the code. If there are no errors, it generates an executable file that can be run on a computer.
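To illustrate the kind of checking a compiler does, the sketch below uses Python’s built-in `compile()` function as a stand-in. Note the assumption: Python compiles to bytecode rather than to a native executable, so this only mirrors the error-checking part of the process. The `check` helper is made up for the example.

```python
def check(source):
    """Try to compile `source` and report whether compilation succeeded."""
    try:
        compile(source, "<example>", "exec")
    except SyntaxError as err:
        return f"compile error: {err.msg}"
    return "compiled without errors"


# Unbalanced parenthesis: rejected at compile time, before anything runs.
print(check("total = (1 + 2"))

# Valid syntax: this compiles, even though running it would fail
# because the list is empty. That kind of problem is left for debugging.
print(check("items = []\nfirst = items[0]"))
```

The second case is why a clean compile does not mean the program is correct: some errors only show up once the code runs.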

On the other hand, debugging refers to finding and fixing problems in existing code. It involves running software tools like debuggers that allow developers to step through their programs line by line while tracking variables’ values at each point until they identify where something went wrong.

While both processes deal with identifying coding issues, in a compiled language debugging usually comes after compilation, because a debugger needs a compiled program to run. Debugging often leads to changes in the source files, which must then be recompiled before the fix can be checked.

Understanding these differences is fundamental because it helps programmers recognize when they need to compile and when they need to debug their code during development.

Why is it important to know the difference between debugging and compiling?

Knowing the difference between debugging and compiling is crucial for any developer or programmer. Debugging involves identifying and fixing errors in the code, while compiling involves converting source code into machine-readable format.

Debugging helps to ensure that the software works as expected by finding and removing any issues in the code. Without proper debugging, a program may not function correctly, leading to frustration and lost productivity.

On the other hand, compiling is essential because it translates human-readable code into machine-readable language that can be executed by computers. Most compilers can also optimize a program during this step, for example by making it run faster or reducing its size.

Understanding these differences helps developers tell whether an error was caught at compile time, before the program ran, or only appeared at runtime, which tells them where to start looking. This knowledge ultimately leads to more efficient development processes, saving time, money, and effort.

Knowing how to debug your programs effectively while understanding what happens during compilation can make all the difference in creating successful applications or software systems.

What is the difference between compiling and executing?

Compiling and executing are two different stages of the software development process. Compiling is the process of converting the human-readable high-level code into machine-readable low-level code, which can be executed by the computer. The compiler takes in source code as input and produces an executable file as output.

On the other hand, executing means running a program that was compiled previously. It involves loading the program and its dependencies from storage into memory so the processor can carry out its instructions.

The main difference between compiling and executing lies in their purpose. Compiling focuses on translating written language to a form that computers understand while executing focuses on performing actions based on instructions generated through compilation.

Another major difference between compiling and executing is their timing. Compiling occurs before execution, where developers convert human-readable code to machine-readable code so that it can be executed later when needed.

In contrast, execution happens after compilation, when users run a program created during the earlier compiling stage. In summary, both stages are needed to get a program running: code must compile successfully before it can be executed.
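The two stages can be seen as separate steps in a short Python sketch. As an assumption for illustration, Python’s built-in `compile()` and `exec()` stand in for a compiler and for running the resulting program; Python produces a bytecode object here rather than a native executable file.

```python
source = "result = sum(range(1, 5))"

# Stage 1: compile. Nothing runs yet; we only get a code object.
code = compile(source, "<example>", "exec")

# Stage 2: execute. This can happen later, and more than once.
namespace = {}
exec(code, namespace)

print(namespace["result"])  # prints 10, which is 1 + 2 + 3 + 4
```

Keeping the stages apart is what lets a program be compiled once and then run many times.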

What is the difference between debugging and testing?

Debugging and testing are two distinct processes in software development, although they share some similarities. Testing involves evaluating the functionality of a program or system to ensure that it works as expected and meets certain requirements. This can be done manually or using automated tools.

On the other hand, debugging is the process of identifying and fixing errors or defects in a program’s code that have been discovered through testing or user feedback. Debugging requires specialized knowledge of programming languages, algorithms, data structures, and other technical aspects related to software development.

While testing focuses on verifying correct behavior under different scenarios and inputs, debugging centers around finding reasons for incorrect behavior by examining source code. In general terms, testing helps identify issues while debugging provides solutions for them.
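A small Python sketch can show the two activities side by side. The leap-year function, its deliberate bug, and the `run_tests` helper are all invented for this example.

```python
def is_leap_year(year):
    """Buggy on purpose: ignores the rules for century years."""
    return year % 4 == 0


def run_tests(func):
    """Testing: return the inputs for which `func` gives the wrong answer."""
    expected = {2024: True, 2023: False, 1900: False, 2000: True}
    return [year for year, want in expected.items() if func(year) != want]


print(run_tests(is_leap_year))  # prints [1900]: testing found a failing case


def is_leap_year_fixed(year):
    """After debugging: century years must also be divisible by 400."""
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


print(run_tests(is_leap_year_fixed))  # prints []: no failing cases remain
```

The test only reports that 1900 gives the wrong answer. Working out why, and changing the condition, is the debugging part.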

Both processes are crucial components of software development that help maintain quality standards in modern applications. Understanding their differences can help developers streamline their workflows and improve overall productivity when working with complex codebases.

Featured Image By – Elchinator from Pixabay